Disclosure: HappyHorse 1.0 is an unreleased model. This article is based on publicly available information from third-party reports. GoIMG is not affiliated with the HappyHorse team.

A Mystery Model Storms the Arena

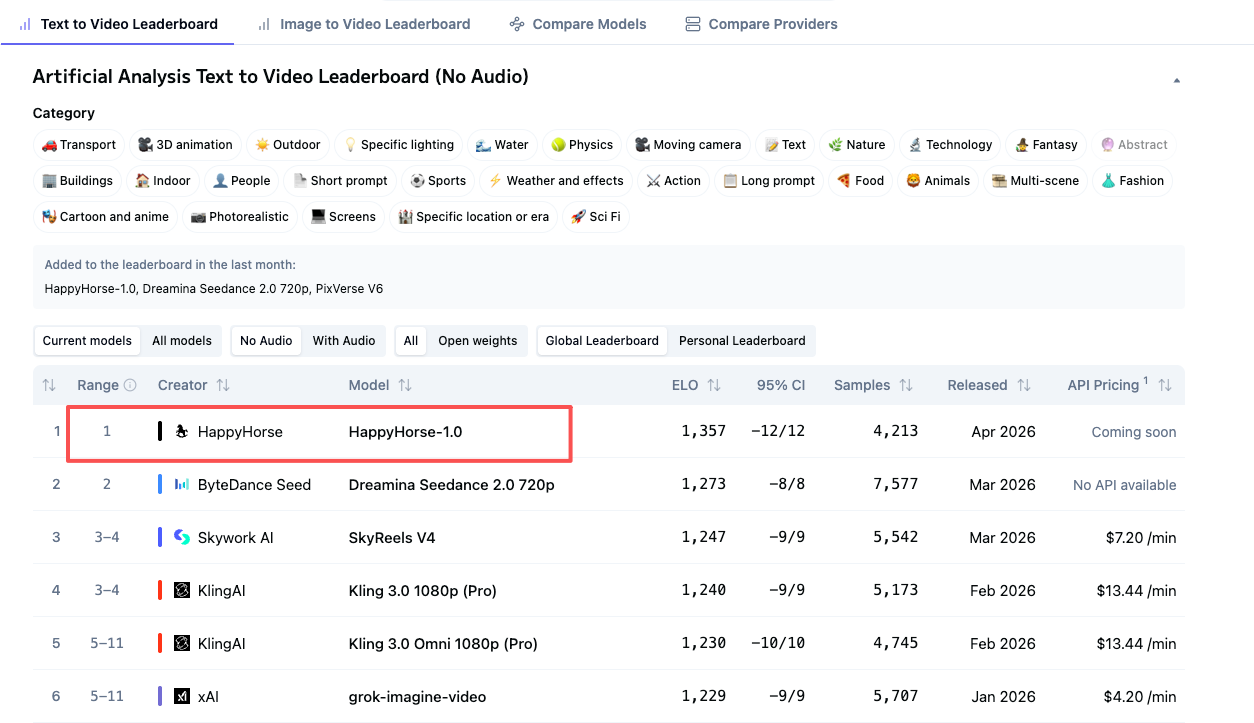

On April 7, 2026, a previously unknown AI video model named HappyHorse-1.0 quietly appeared on the Artificial Analysis Video Arena leaderboard. Within hours, it had reportedly displaced every established player in both the text-to-video and image-to-video categories.

No press release. No team announcement. No GitHub repository. Just a name, an Elo score around 1333, and a cryptic landing page that read "coming soon."

By April 8, it was gone.

The Numbers That Shocked the Industry

In blind voting on the Artificial Analysis Arena, HappyHorse-1.0 reportedly outperformed:

- Seedance 2.0 (ByteDance)

- Kling 3.0 (Kuaishou)

- PixVerse V6

- And several closed-source frontier models

An unannounced model from an unknown team had topped the leaderboard against billion-dollar labs, and the industry had only one reaction: who the hell built this?
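To put an Elo score of roughly 1333 in context, here is a minimal sketch of how an Elo gap translates into an expected head-to-head win rate. It assumes the standard logistic Elo formula with a 400-point scale; the exact rating method the Arena uses is not described in the reports, and the rival ratings below are hypothetical gaps chosen for illustration, not actual leaderboard values.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected score of A against B under the standard Elo model.

    With no ties, this is A's expected win probability in a blind
    pairwise vote. The 400-point scale is the conventional choice and
    is an assumption here.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


if __name__ == "__main__":
    reported = 1333.0  # approximate Elo reported for HappyHorse-1.0
    for gap in (0, 25, 50, 100):  # hypothetical gaps, not real leaderboard data
        rival = reported - gap
        p = elo_expected_score(reported, rival)
        print(f"rival {gap:>3} points lower: expected win rate {p:.3f}")
```

Under this model, even a leaderboard lead of a few dozen points means winning only slightly more than half of blind comparisons, which is why small Elo gaps at the top of an arena should be read cautiously.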

What We Know

From the limited public information and the brief landing pages that surfaced:

| Spec | Reported Value |
|---|---|
| Architecture | Single-stream 40-layer Transformer |
| Parameters | ~15 billion |
| Output | Native 1080p, text-to-video and image-to-video |
| Audio | Joint audio-video generation, 7-language lip-sync |
| Inference | DMD-2 distilled, 8 denoising steps, ~38s per 1080p clip |
| License | Claimed open source with commercial rights |

If verified, those specs would put HappyHorse roughly on par with, or slightly ahead of, the video models currently shipping.
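As a rough sanity check on the reported parameter count, the sketch below estimates how much memory the weights alone would occupy at common numeric precisions. The precision the model would actually ship in has not been reported, so the options listed are assumptions, and the figures exclude activations and any auxiliary components such as text encoders or decoders.

```python
# Bytes per parameter for common precisions (assumed options; the
# precision of any HappyHorse release has not been reported).
BYTES_PER_PARAM = {"fp32": 4, "fp16/bf16": 2, "int8": 1}


def weight_memory_gib(num_params: float, bytes_per_param: int) -> float:
    """Memory needed to hold the weights alone, in GiB (2**30 bytes)."""
    return num_params * bytes_per_param / 2**30


if __name__ == "__main__":
    reported_params = 15e9  # "~15 billion" from the reported spec table
    for precision, nbytes in BYTES_PER_PARAM.items():
        gib = weight_memory_gib(reported_params, nbytes)
        print(f"{precision:>9}: about {gib:.1f} GiB for weights only")
```

At 16-bit precision this comes to just under 28 GiB, so if the claimed open weights ever appear, running them unquantized would likely call for a high-memory GPU.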

Then It Vanished

Just as suddenly as it appeared, HappyHorse-1.0 disappeared from the Arena leaderboard. The "coming soon" links remained "coming soon." No weights were uploaded. No API was opened. No team has claimed it.

What's left is a handful of screenshots, a few archived benchmark snapshots, and an open question that has much of the AI video research community speculating.

Why This Matters

A handful of independent observers have noted patterns suggesting HappyHorse may be a stealth release from an established Chinese lab, possibly tied to Sand.ai and the Shanghai Innovation Institute. The evidence they cite is the language ordering on the official site (Mandarin and Cantonese listed before English) and benchmark fingerprints that closely match a model internally named daVinci-MagiHuman.

But until weights or an API actually drop, everything is speculation.

What's Next

For the rest of us trying to make AI video today, the practical answer is unchanged: use what's actually shipping. Seedance 2.0 remains the most capable production-ready model with native audio-video generation, multi-shot output up to 15 seconds, and cinematic camera control — and it's available right now on the Happy Horse video generator.

If HappyHorse ever releases properly, the leaderboard story will get more interesting. Until then, it's vapor.

Want the Deeper Analysis?

- ⚔️ HappyHorse 1.0 vs Seedance 2.0: Which AI Video Generator Is Actually #1? — head-to-head feature comparison

- 🧠 Inside HappyHorse 1.0: Architecture, Benchmarks, and Who Might Be Behind It — deep technical analysis