Disclosure: GPT-Image-2 was announced by OpenAI on April 16, 2026. Broad API access is still rolling out. This comparison is based on OpenAI's announcement, publicly reported Seedream 5.0 benchmarks, and early community reports on GPT-Image-2. GoIMG is not affiliated with OpenAI. GoIMG's GPT-Image-2 page runs on Seedream 5.0.

The Question

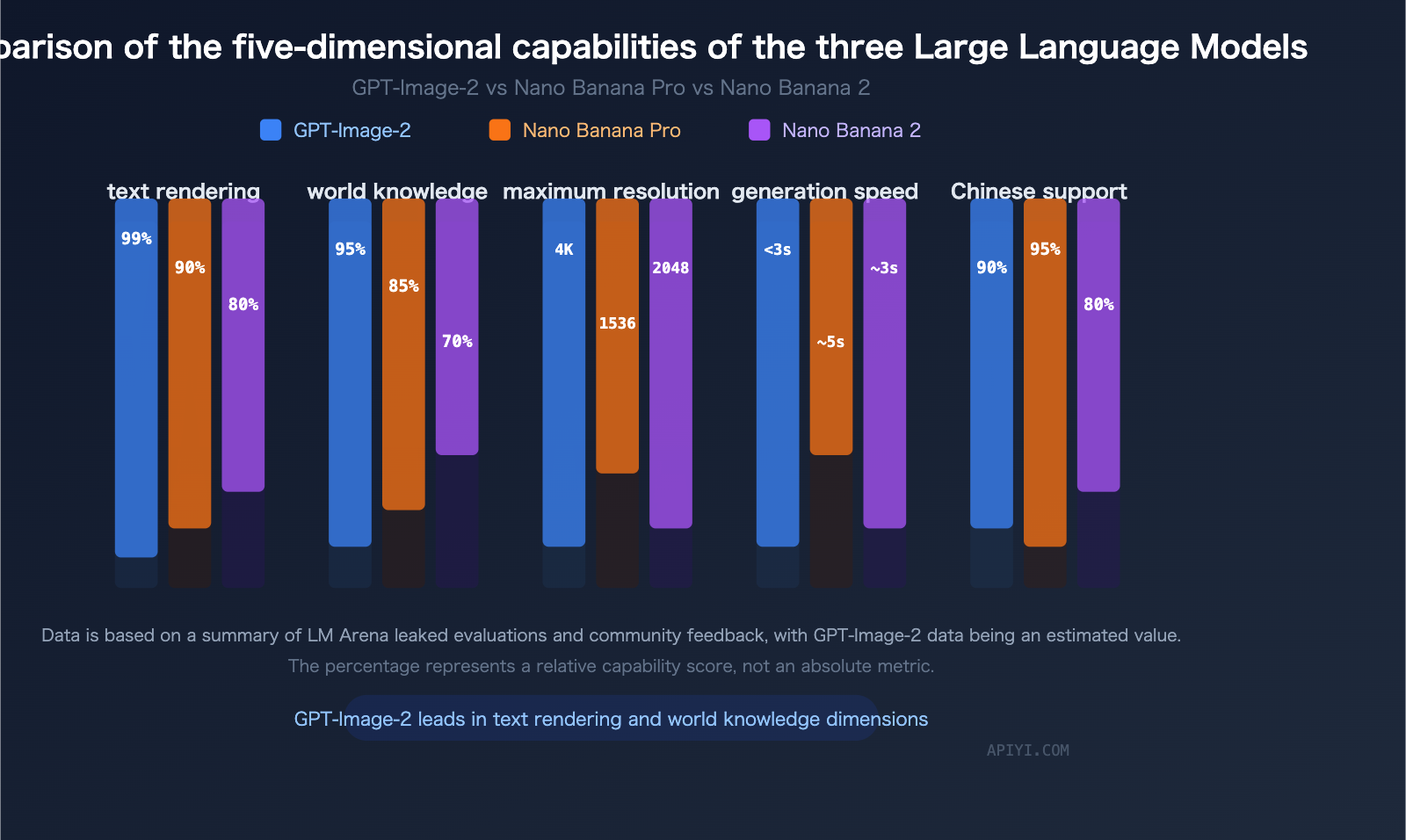

As of April 17, 2026, the two most credible candidates for "best production image model" are:

- GPT-Image-2 — OpenAI's new native multimodal image model, announced yesterday

- Seedream 5.0 — ByteDance's reasoning-augmented image model, shipping since late March 2026

They are built on fundamentally different philosophies, but they compete for the same user: creators and developers who need one model that reliably turns a precise brief into a usable image.

This article is the head-to-head.

TL;DR

| GPT-Image-2 | Seedream 5.0 | |

|---|---|---|

| Announced / released | Apr 16, 2026 | Late Mar 2026 |

| Architecture | Native multimodal transformer (autoregressive image tokens) | Reasoning-augmented diffusion with web retrieval |

| Max output | High-res (tier-gated by plan) | Up to 3K native |

| Text rendering | Best-in-class, including CJK / Arabic / Cyrillic | Strong, including non-Latin scripts |

| Reasoning | Integrated with GPT reasoning stack | Deep-thinking step before generation |

| Web-connected retrieval | Not explicitly advertised | Yes — first image model with live retrieval |

| Multi-image reference | Yes, composable | Yes, including before/after example pairs |

| Editing | Conversational edits, mask-free in context | Example-based edits (before/after teaches the edit) |

| Availability right now | Phased rollout — ChatGPT Pro + API in waves | Generally available, no waitlist |

| Use it today | If you have access | Try the GPT-Image-2 page on GoIMG |

If you stop reading here: for most creators on April 17, 2026, the one you can actually use is Seedream 5.0.

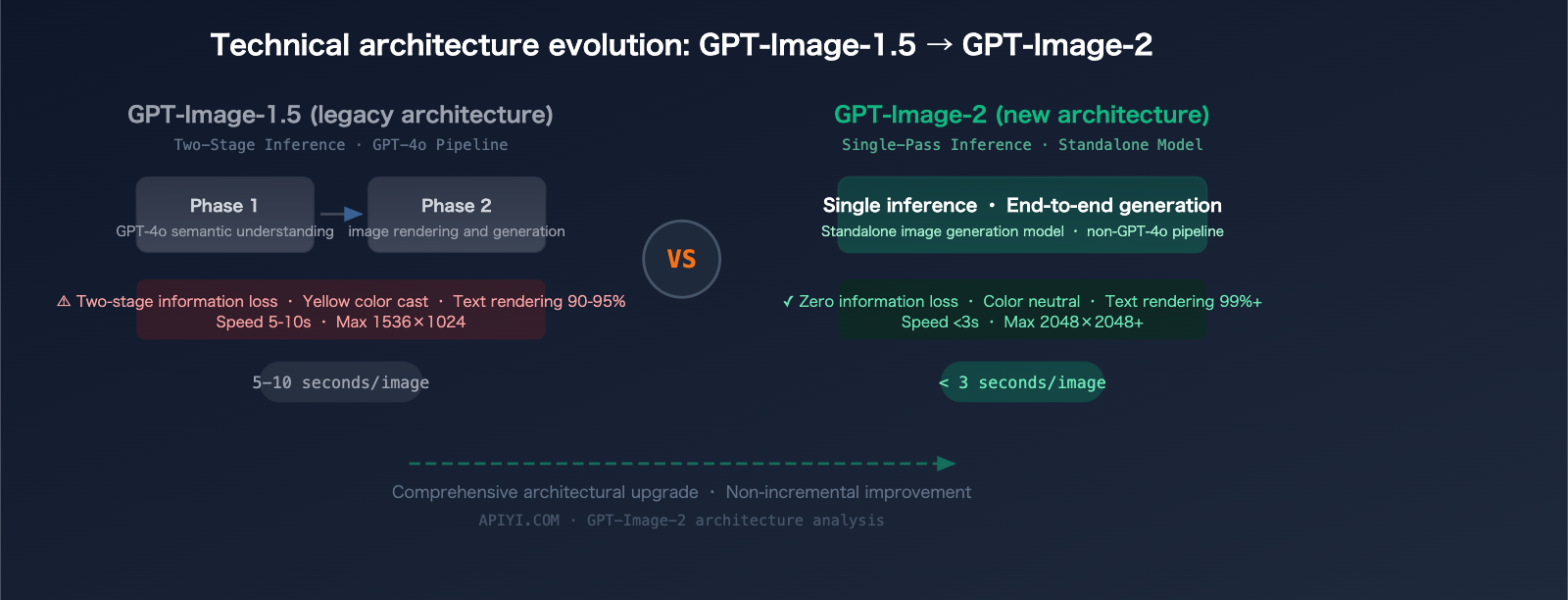

Round 1: Architecture

GPT-Image-2 is a native multimodal transformer. Image tokens are generated autoregressively by the same model that handles text, which is why its text rendering and instruction following are so tight — the language model is literally the image model. No diffusion sampler, no separate VAE hand-off, no fine-tuning gap between "understand the prompt" and "draw the thing."

Seedream 5.0 takes the opposite bet. It is a diffusion model with a reasoning layer — before the diffusion pipeline runs, a smaller reasoning module decomposes the prompt, resolves constraints, and plans a generation. This gives it a different kind of prompt fidelity: it excels at spatial reasoning, logical composition (weight distribution on a seesaw, correct reflections, physically plausible shadows), and structured multi-element layouts.

Both architectures now beat the "pure diffusion, no reasoning" baseline that dominated 2023–2025.

Edge: Too close to call on architecture alone. Different strengths.

Round 2: Text Rendering

This is the round GPT-Image-2 was built to win.

- GPT-Image-2 renders text at a level that effectively closes the historical failure mode of AI image generation. Headlines, captions, multi-line typography, small text, and non-Latin scripts all ship usable on a majority of first generations.

- Seedream 5.0 also handles text well — Seedream's entire 5.0 launch message was that text rendering is no longer a problem — but in side-by-side tests on the hardest cases (dense small text, precise kerning, complex scripts), GPT-Image-2 has an edge.

Edge: GPT-Image-2 by a modest margin, meaningful only at the extremes.

Round 3: Reasoning and Instruction Following

Both models are reasoning-aware. They approach it differently.

- GPT-Image-2 inherits reasoning from OpenAI's o-series / GPT-4.x stack. It thinks in natural language before emitting image tokens, which means long, multi-constraint prompts resolve coherently.

- Seedream 5.0 runs a discrete deep-thinking step ahead of diffusion. On spatial, logical, and physics-style prompts (seesaws, reflections, perspective) it is particularly strong — it's one of the few models that gets "four balls, the red one is heaviest, balanced on a seesaw with a cube" right first try.

Edge: GPT-Image-2 on linguistic / typographic instructions; Seedream 5.0 on spatial / logical / physical ones. Pick based on use case.

Round 4: Current-Events and Real-World Grounding

Seedream 5.0 advertises live web retrieval — it can pull current references from the internet to improve subject accuracy, trend awareness, and up-to-date cultural rendering.

GPT-Image-2 has not, in the announcement, explicitly claimed web-connected retrieval for image generation. Its world knowledge is strong via the underlying GPT model, but that knowledge has a training cutoff.

Edge: Seedream 5.0 for anything tied to this week's news, a trending style, or a just-launched product.

Round 5: Editing and Reference Inputs

- GPT-Image-2 supports conversational, in-context editing across reference images. You describe the edit; it happens.

- Seedream 5.0 pioneered example-based editing — give it a before/after pair and a third image, and the model infers the transformation and applies it. This is remarkably effective for batch edits, material swaps, style transfers, and cross-image consistency.

Both support multi-image reference for subject identity, style, and composition.

Edge: Different tools. If you already have a before/after pair, Seedream 5.0 is faster. If you want to describe the edit in natural language, GPT-Image-2 is more fluent.

Round 6: Availability (the round that matters)

This is where the comparison grounds out.

GPT-Image-2 (April 17, 2026):

- Live in ChatGPT for Pro subscribers

- Rolling out to the OpenAI Images API in waves

- Rate limits and access gates for the first few weeks

- No self-hosting, no weights, no local inference

Seedream 5.0 (April 17, 2026):

- Generally available, no waitlist, no access gates

- Accessible right now via the GPT-Image-2 page on GoIMG

- Up to 3K native output in the standard tier

- API access for developers

If you need to ship this afternoon, the comparison is over.

Should You Wait for GPT-Image-2?

If you already have API access: absolutely, start integrating and testing. The text-rendering and instruction-following improvements are genuine and will matter for any text-heavy visual work.

If you don't have access yet: don't stop working. Seedream 5.0 is within striking distance of GPT-Image-2 on most real-world tasks, exceeds it in a few (spatial reasoning, web retrieval, 3K native output), and costs nothing to try. When your GPT-Image-2 access lands, swap it in for the workloads that benefit and keep Seedream 5.0 for the rest.

Verdict

There is no single winner. There is a pair of models, each stronger on some axes, with different licensing and availability constraints.

Practically:

- For text-heavy design (posters, ads with copy, product labels): GPT-Image-2 if you have access.

- For spatial / physical / current-events imagery: Seedream 5.0.

- For today, for everyone, without a waitlist: Seedream 5.0 via the GPT-Image-2 page on GoIMG.

Related Reading

- 📰 GPT-Image-2 Is Here: OpenAI's Next-Gen Native Image Model, Explained — the launch story

- 🧠 Inside GPT-Image-2: How OpenAI's Native Image Model Likely Works — technical deep dive

- 🎨 Seedream 5.0: Next-Gen AI Image Creation with Deep Reasoning — what's actually shipping on GoIMG today