Disclosure: GPT-Image-2 was announced by OpenAI on April 16, 2026. OpenAI has not released model weights, a technical paper, or full architectural details. This article analyzes the announcement together with public knowledge of GPT-Image-1 (released in GPT-4o, March 2025) and OpenAI's broader multimodal stack. Specific numeric claims about GPT-Image-2 that are not directly stated by OpenAI should be treated as informed speculation. GoIMG is not affiliated with OpenAI.

Why This Model Matters

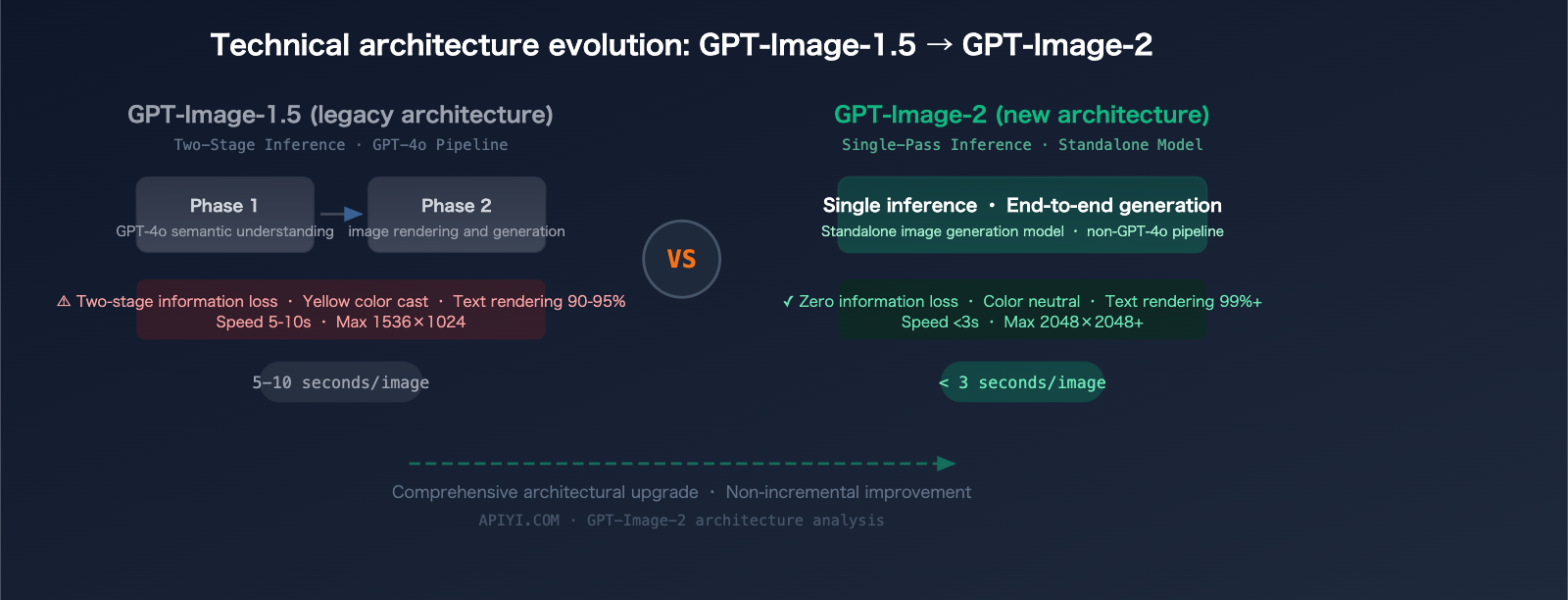

For most of the AI image era, "image model" and "language model" were different things glued together. You prompted a language model, something translated that prompt into a diffusion conditioning vector, and a U-Net or DiT sampled pixels. The language model didn't really see what it was asking for.

GPT-Image-1 broke that pattern in early 2025 by letting GPT-4o emit image tokens directly — no diffusion handoff, no separate sampler, one model doing both. Text rendering, which diffusion had struggled with for years, suddenly became nearly trivial. Instruction following got sharper.

GPT-Image-2, announced on April 16, 2026, is the logical next step in that lineage. This article walks through what the model almost certainly is — based on the GPT-Image-1 blueprint, the capabilities OpenAI called out in the announcement, and what OpenAI's broader research program has shipped in the intervening year.

The Architecture, Step by Step

1. Images as Tokens

At the core of the GPT-Image line is a simple idea: images are sequences of discrete tokens, just like text. A learned tokenizer (in GPT-Image-1, a VQ-VAE-style codec) compresses patches of pixels into discrete codebook entries. The transformer generates those tokens autoregressively; a decoder turns the tokens back into pixels.

This is the same trick that underpinned DALL-E 1 in 2021 — but scaled up and trained jointly with text and reasoning on a frontier-class base model.

| Component | Role |

|---|---|

| Image tokenizer | Compress image patches into discrete tokens |

| Text tokenizer | Standard BPE-style text tokens |

| Shared transformer | Consumes and produces mixed text + image token streams |

| Image decoder | Reconstruct pixels from generated image tokens |

For GPT-Image-2, the most likely axes of improvement are:

- Finer tokenizer — more codebook entries, smaller patches, higher effective resolution per fixed token budget

- Longer context — enabling higher final resolutions and more reference inputs

- Better joint training — more aggressive curriculum on text-rich images, reference/edit pairs, and multi-constraint prompts

2. Reasoning Enters Image Generation

The defining capability of OpenAI's 2025–2026 research has been reasoning — the o-series models, then the reasoning upgrades to GPT-4.x and GPT-5.x. The announcement for GPT-Image-2 implies that this reasoning machinery now runs before image tokens are emitted.

In practice, that probably means:

- The model receives the prompt

- Internally, it performs a reasoning pass — decomposing constraints, resolving conflicts, planning composition

- Then it emits the image token sequence conditioned on that plan

The end-user observation: complex multi-constraint prompts now behave the way GPT users have been trained to expect. Specific typography, layout rules, brand colors, and spatial instructions compose coherently rather than averaging into mush.

This is the same general move Seedream 5.0 made with its "deep thinking" pass, just implemented inside a unified autoregressive model instead of as a separate step ahead of a diffusion pipeline.

3. Why Text Rendering Works

Diffusion models render text badly for a structural reason: they don't model text as text. They model the visual statistics of pixels, and letters happen to be made of pixels. Without linguistic supervision over the pixels they produce, diffusion samplers have no inherent reason to respect letter shapes, kerning, or spelling.

Autoregressive image-token models do have that supervision. The same transformer that has been trained on trillions of tokens of text is the one emitting image tokens. Its prior over "letter shape in context" is enormous. That's why GPT-Image-1 beat every diffusion model on text rendering, and why GPT-Image-2 pushes the bar further — bigger base model, longer context, better tokenizer.

4. Editing and References as Token Streams

If images are token sequences, editing becomes natural: paste the original image as a prefix, describe the edit, and the model emits the edited image as the continuation. Reference images become additional prefix sequences. Multiple references → longer prefix. Before/after teaching pairs → a two-image prefix followed by a third image to transform.

This is why GPT-Image-2's editing and reference behavior is "composable" in a way diffusion inpainting isn't — there is no special mode for editing; editing is just a longer context window.

What OpenAI Specifically Claimed

From the April 16, 2026 announcement:

- Higher effective resolution at lower cost

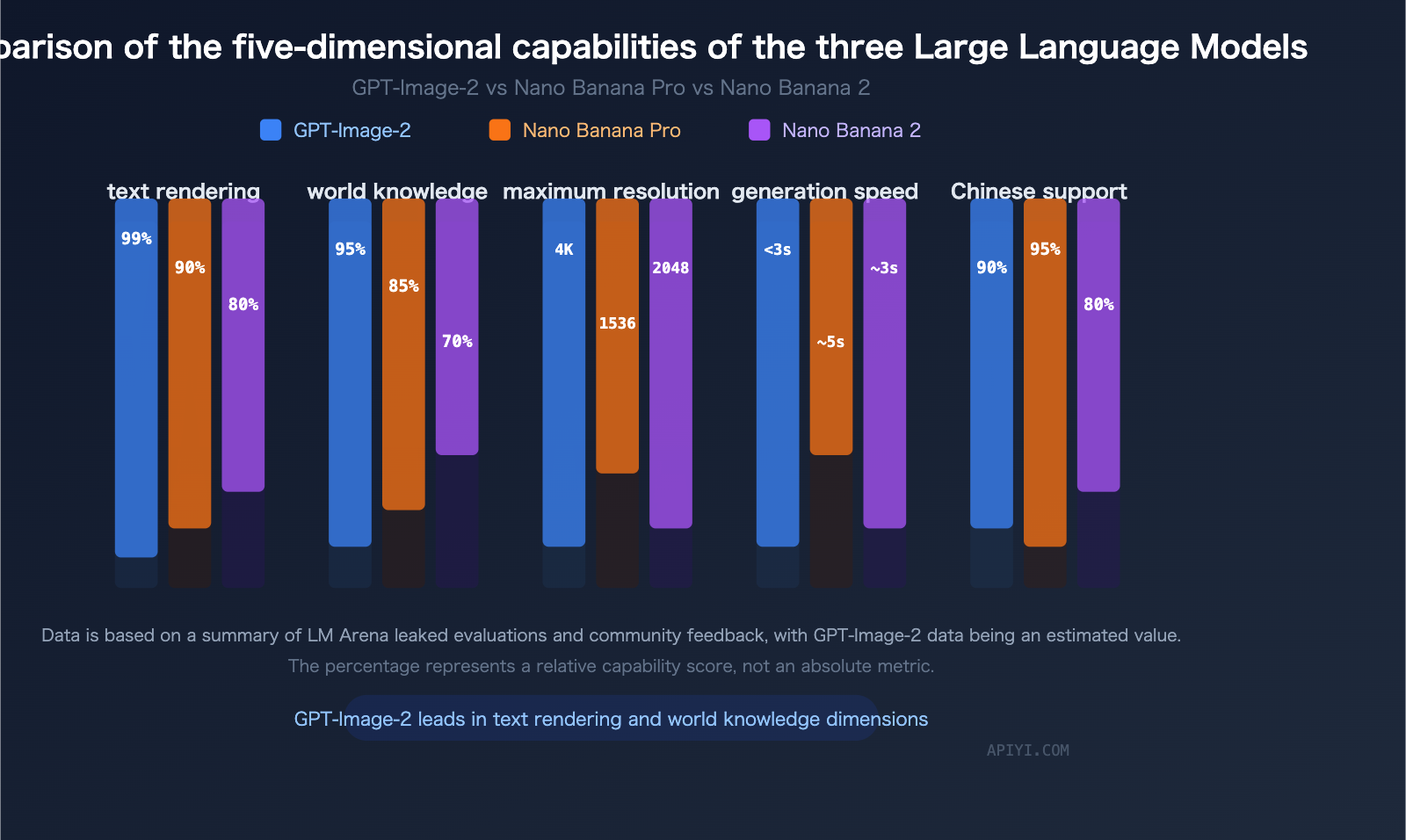

- Stronger text rendering across languages (including CJK, Arabic, Cyrillic)

- More faithful multi-constraint instruction following

- Improved edit and reference-image behavior

- Phased API rollout

What OpenAI did not claim:

- Web-connected retrieval (unlike Seedream 5.0)

- Open weights or on-prem deployment

- Specific benchmark numbers versus competitors

- A fixed GA date

Absence of a claim is not evidence of absence, but it is worth tracking. Web retrieval in particular is a real capability gap that affects any use case tied to recent events.

Where the Quality Gains Come From

If you trust the announcement, the quality improvements over GPT-Image-1 likely compose from four sources:

- Bigger, smarter base model — GPT-Image-2 almost certainly sits on top of a newer GPT reasoning model than the GPT-4o that shipped GPT-Image-1

- Better tokenizer — higher effective resolution per token, fewer visible artifacts, sharper small features

- Reasoning integration — multi-constraint prompts are now resolved before generation begins

- Longer context — more reference images, larger final outputs, more complex edit chains

None of these are new individually. The combination is.

Limits and Honest Caveats

A few things are worth saying plainly.

No weights. GPT-Image-2 is a cloud service. That is fine for product teams; it is a dealbreaker for research reproducibility, data residency, or sovereign deployment.

Phased rollout. Even users with Pro access will see rate limits and capacity-gated behavior for the first weeks. Don't plan a launch that depends on unrestricted throughput.

Benchmarks are not public yet. OpenAI did not publish benchmark numbers in the announcement. Side-by-side comparisons with Seedream 5.0, Imagen 4, and the frontier Chinese labs will take time to stabilize.

The diffusion side is not standing still. Seedream 5.0, Imagen 4, and several Chinese labs are shipping reasoning-augmented diffusion models that match GPT-Image-2 on many tasks today, beat it on some, and offer capabilities (web retrieval, 3K native output) that GPT-Image-2 does not advertise.

What to Do With This Information

If you're a researcher: watch for a tokenizer change. Most of GPT-Image-2's quality gain is almost certainly in the image token codec. When OpenAI publishes a paper or a model card — which may or may not happen — that will be the detail worth reading closely.

If you're a builder: the architectural lesson is that autoregressive image tokens + frontier reasoning models are now a serious competitor to diffusion on text-heavy, instruction-heavy, edit-heavy workloads. Diffusion is still ahead on pure photorealism and on anything that benefits from fast denoising distillation. Pick per workload.

If you're shipping product today: use what works today. The GPT-Image-2 page on GoIMG runs on Seedream 5.0 — a reasoning-augmented diffusion model that covers the same surface area for most real-world tasks, with 3K native output, web-connected retrieval, and no waitlist.

Related Reading

- 📰 GPT-Image-2 Is Here: OpenAI's Next-Gen Native Image Model, Explained — the launch story

- ⚔️ GPT-Image-2 vs Seedream 5.0: Which AI Image Model Should You Actually Use? — head-to-head comparison

- 🎨 Seedream 5.0: Next-Gen AI Image Creation with Deep Reasoning — what's actually shipping on GoIMG today